This article documents a Master 1 student project completed in 2023–2024, written from the perspective of December 2024. It provides an honest technical retrospective including architecture decisions, implementation challenges, technical debt, and lessons learned.

Written in December 2024

Team: 4 students (1× Rust backend, 2× infra/Kubernetes, 1× frontend/docs, which was me)

Effective Duration: ≈9 months shared with courses and other projects

Current Status: Functional Proof-of-Concept in local demo, not production-maintained

1. The Real Problem We Wanted to Solve

In most organizations using Harbor as a private registry:

- Developers (or CI pipelines) push images directly

- Built-in vulnerability scanning (Trivy or Clair) often triggers after the push

- There’s generally no systematic static malware detection

- Dynamic (runtime) analysis is virtually non-existent before admission

- There’s no mandatory human checkpoint for critical images

- Rejections aren’t centrally tracked in a usable, auditable way

Our objectives:

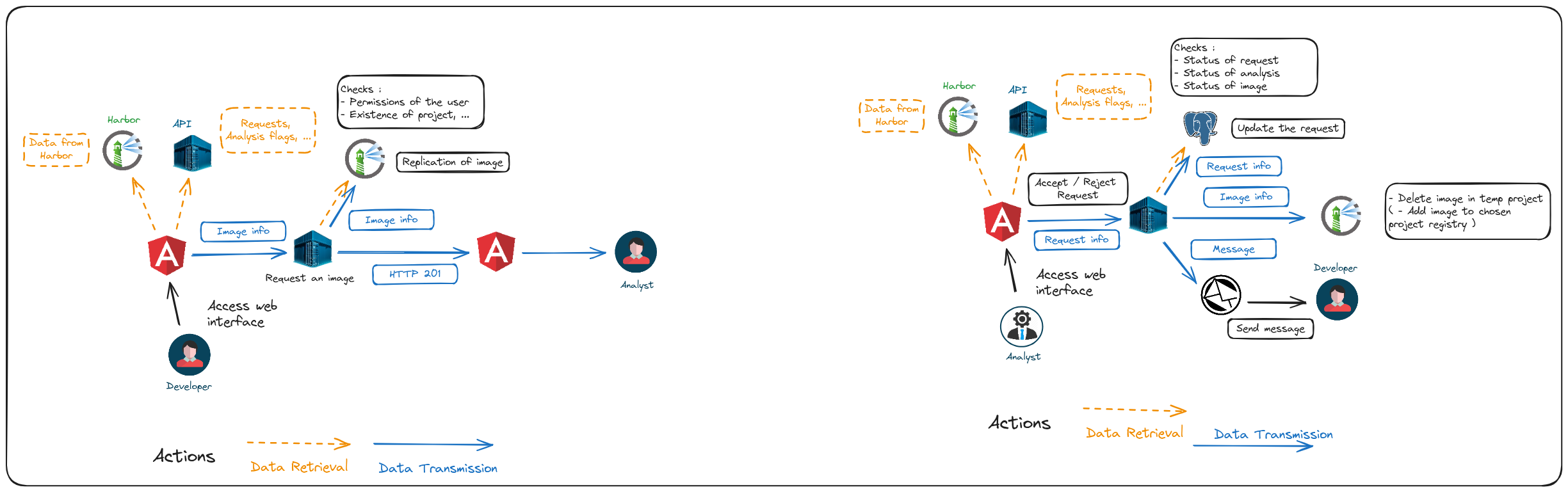

- Enforce explicit request + human approval before admission to sensitive projects

- Stack multiple analysis layers: vulnerabilities + static malware + runtime behavior

- Centralize results and decisions in the existing Harbor interface

- Keep the system light enough to remain realistic in a student context

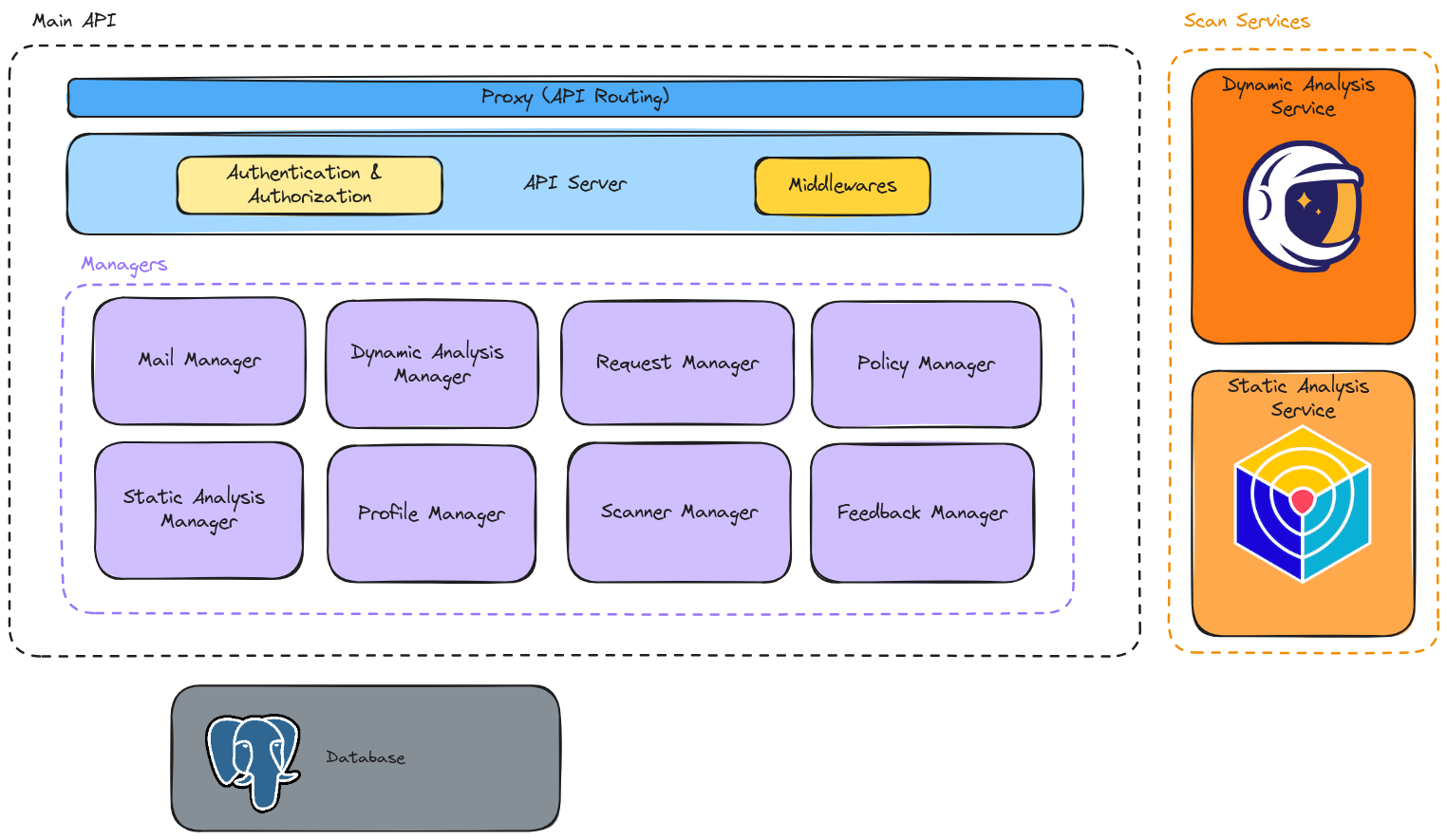

2. Static Architecture

3. Detailed Workflow (user)

4. Notable Implementation Points

Mandatory Image Import into K3s

YaraHunter only scans images that are present locally on the node → a manual import is required: docker save <image> | sudo k3s ctr images import -

Attempted automation via sidecar crictl pull → abandoned (auth and permission issues).
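For readers who want to script the manual import step, here is a minimal sketch of the same pipe driven from Rust with only the standard library. It is not code from the project: the function names are hypothetical, and it assumes docker, sudo and k3s are on the PATH of the machine running it.

```rust
use std::io;
use std::process::{Command, Stdio};

/// Builds the argument vectors for both halves of the pipe
/// `docker save <image> | sudo k3s ctr images import -`.
/// (Hypothetical helper, kept separate so it can be checked without Docker.)
fn import_pipeline_args(image: &str) -> (Vec<String>, Vec<String>) {
    let save: Vec<String> = vec!["docker".to_string(), "save".to_string(), image.to_string()];
    let import: Vec<String> = ["sudo", "k3s", "ctr", "images", "import", "-"]
        .iter()
        .map(|s| s.to_string())
        .collect();
    (save, import)
}

/// Streams the tarball produced by `docker save` straight into the
/// K3s containerd import. Returns Ok(true) only if both commands succeed.
fn import_image_into_k3s(image: &str) -> io::Result<bool> {
    let (save, import) = import_pipeline_args(image);

    // Left-hand side of the pipe: stdout is captured instead of printed.
    let mut producer = Command::new(&save[0])
        .args(&save[1..])
        .stdout(Stdio::piped())
        .spawn()?;
    let tarball = producer
        .stdout
        .take()
        .expect("stdout was configured as piped");

    // Right-hand side of the pipe: reads the tarball from stdin ("-").
    let consumer_status = Command::new(&import[0])
        .args(&import[1..])
        .stdin(Stdio::from(tarball))
        .status()?;
    let producer_status = producer.wait()?;

    Ok(producer_status.success() && consumer_status.success())
}
```

Calling import_image_into_k3s with an image reference runs the same two commands as the manual step; it does not solve the registry authentication issues that made us abandon the crictl sidecar.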

Flag Normalization

Single flags table:

- type (enum)

Allowed values: vuln|malware|dynamic|policy|other

  - vuln: detected vulnerability (CVE, dependency, config, etc.)

  - malware: malware / IOC detection

  - dynamic: dynamic analysis result (sandbox, behavior)

  - policy: rule violation (compliance, access, secrets, etc.)

  - other: generic category

- severity (low/medium/high/critical)

Standardized severity level:

  - 🟢 low

  - 🟠 medium

  - 🔴 high

  - ⚫ critical

- details (JSONB)

Flexible payload whose keys follow a convention that depends on type.

Examples of useful keys (case-dependent):

{

"title": "string",

"description": "string",

"source": "string",

"confidence": 0.0,

"timestamp": "2026-02-24T12:34:56Z",

"cve": "CVE-2026-12345",

"cwe": "CWE-79",

"package": "string",

"version": "string",

"fix_version": "string",

"ioc": {

"hash": "string",

"domain": "string",

"ip": "string",

"url": "string"

},

"policy_id": "string",

"rule": "string",

"control": "string",

"evidence": [

{

"type": "log|snippet|stacktrace|file|command|http",

"summary": "string",

"data": "string",

"path": "string",

"line_start": 0,

"line_end": 0,

"timestamp": "2026-02-24T12:34:56Z"

}

],

"recommendation": "string",

"references": ["https://example.com/reference-1", "https://example.com/reference-2"]

}

- raw_path (string)

Optional path to the raw artifact (e.g., on PVC) if needed:

- complete logs

- sandbox dump

- suspicious file

- original scanner report

Example:

/pvc/scans/2026-02-24/job-123/report.json

/pvc/artifacts/sample-abc123.bin
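To make the shape of a flag more concrete, the four columns described above could be modeled roughly as follows. This is an illustrative sketch, not the project's actual types: the names FlagType, Severity, Flag and worst_severity are assumptions, and details is kept as raw JSON text to avoid depending on a JSON crate.

```rust
use std::str::FromStr;

/// Mirrors the `type` enum: vuln|malware|dynamic|policy|other.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
enum FlagType {
    Vuln,
    Malware,
    Dynamic,
    Policy,
    Other,
}

impl FromStr for FlagType {
    type Err = String;

    /// Parses the lowercase value stored in the database column.
    fn from_str(s: &str) -> Result<Self, Self::Err> {
        match s {
            "vuln" => Ok(FlagType::Vuln),
            "malware" => Ok(FlagType::Malware),
            "dynamic" => Ok(FlagType::Dynamic),
            "policy" => Ok(FlagType::Policy),
            "other" => Ok(FlagType::Other),
            unknown => Err(format!("unknown flag type: {}", unknown)),
        }
    }
}

/// Variants are declared from least to most severe, so the derived
/// ordering gives Low < Medium < High < Critical.
#[derive(Debug, Clone, Copy, PartialEq, Eq, PartialOrd, Ord)]
enum Severity {
    Low,
    Medium,
    High,
    Critical,
}

/// One row of the flags table (hypothetical field names).
#[derive(Debug, Clone)]
struct Flag {
    flag_type: FlagType,
    severity: Severity,
    /// JSONB payload kept as raw JSON text in this sketch.
    details: String,
    /// Optional path to the raw artifact on the PVC.
    raw_path: Option<String>,
}

/// Highest severity among a set of flags, or None when there are no flags.
fn worst_severity(flags: &[Flag]) -> Option<Severity> {
    flags.iter().map(|f| f.severity).max()
}
```

Keeping severity ordered makes it cheap to summarize an image for the reviewer, for example by showing only the worst severity across all scanners.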

Harbor Scan Polling

Tokio loop ticking every 5 s (interval) with a maximum attempt counter → the resulting timeout is configurable via an environment variable.
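The polling pattern itself is simple enough to sketch. The version below is a blocking, std-only stand-in (std::thread::sleep instead of Tokio's interval) so that it stays dependency-free; it is not the project's code. The names poll_until_ready and max_attempts_from_env are hypothetical, as is any environment variable name you would pass in.

```rust
use std::thread;
use std::time::Duration;

/// Result of a bounded polling loop.
#[derive(Debug, PartialEq)]
enum PollOutcome<T> {
    Ready(T),
    TimedOut,
}

/// Reads the attempt limit from an environment variable, falling back to
/// `default` when the variable is unset or not a valid number.
fn max_attempts_from_env(var: &str, default: u32) -> u32 {
    std::env::var(var)
        .ok()
        .and_then(|value| value.parse().ok())
        .unwrap_or(default)
}

/// Calls `check` up to `max_attempts` times, sleeping `interval` between
/// attempts. `check` returns Some(value) once the scan report is available.
fn poll_until_ready<T, F>(interval: Duration, max_attempts: u32, mut check: F) -> PollOutcome<T>
where
    F: FnMut() -> Option<T>,
{
    for attempt in 1..=max_attempts {
        if let Some(value) = check() {
            return PollOutcome::Ready(value);
        }
        // No point in sleeping after the final failed attempt.
        if attempt < max_attempts {
            thread::sleep(interval);
        }
    }
    PollOutcome::TimedOut
}
```

With a 5 s interval, the effective timeout is roughly 5 s × (max attempts − 1) plus the time spent in the checks themselves, which is why exposing the counter through the environment is enough to tune it.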

YaraHunter Parsing

We patched the YaraHunter binary to write structured JSON to /output/report.json → mounted via PVC (persistent volume claim) → read by the API.

Image Status

Late refactor: moved status from images table to static_analyses for better normalization.

5. Structural Limitations & Technical Debt (December 2024 Status)

- API completely open (no authentication) → critical if exposed

- The entire workflow runs synchronously within the initial request → no queue

- Dynamic analysis service never completed → mocked responses

- Strong dependency on mounting ~/.kube/config → multi-cluster impossible without rework

- No notifications (email / Slack / webhook) after decision

- Frontend breaks as soon as Harbor upgrades beyond v2.9

- Tests: only unit tests on domain (~80 cases) → zero integration / E2E tests

- No monitoring (limited structured logs, zero Prometheus metrics)
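To illustrate the "no queue" item above, here is one way the scan step could be decoupled from the incoming request, using only standard-library channels and a single worker thread. It is a sketch under stated assumptions, not something the project implemented: ScanRequest, ScanResult and start_scan_worker are hypothetical names, and a real deployment would more likely use a persistent queue.

```rust
use std::sync::mpsc;
use std::thread;

/// A request to analyze one image (hypothetical shape).
#[derive(Debug, Clone, PartialEq)]
struct ScanRequest {
    image: String,
}

/// Outcome of the analysis for one image (hypothetical shape).
#[derive(Debug, Clone, PartialEq)]
struct ScanResult {
    image: String,
    accepted: bool,
}

/// Spawns a worker thread that consumes requests in order and emits results.
/// The caller enqueues work through the returned sender and returns
/// immediately; results are read later from the returned receiver.
fn start_scan_worker<F>(scan: F) -> (mpsc::Sender<ScanRequest>, mpsc::Receiver<ScanResult>)
where
    F: Fn(&ScanRequest) -> bool + Send + 'static,
{
    let (request_tx, request_rx) = mpsc::channel::<ScanRequest>();
    let (result_tx, result_rx) = mpsc::channel::<ScanResult>();

    thread::spawn(move || {
        // The loop ends once every request sender has been dropped.
        for request in request_rx {
            let accepted = scan(&request);
            let result = ScanResult { image: request.image, accepted };
            // Stop if nobody is listening for results anymore.
            if result_tx.send(result).is_err() {
                break;
            }
        }
    });

    (request_tx, result_rx)
}
```

The HTTP handler would then only enqueue a ScanRequest and answer right away, while the worker runs the slow scans in the background.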

6. What We Would Do Radically Differently Today

- Never patch the Harbor frontend → prefer webhooks + dedicated UI or plugin

- Use Kyverno or OPA Gatekeeper for native Kubernetes admission policies

- Integrate Cosign + Fulcio + Rekor (Sigstore) for signing & SLSA attestations from the start

- Orchestrate scans with Argo Workflows or Tekton Pipelines (visual + retry + parallelism)

- Add Prometheus + Loki / Grafana from day one

- Migrate to axum instead of Actix-web (better maintained and more ergonomic)

- Make the malware scanner serverless (KEDA / Knative) for scale-to-zero

- Provide a Kubernetes CRD for admission requests (operator pattern)

7. Conclusion – Real Project Value

The code produced is not production-ready as-is: it’s an ambitious POC with significant technical debt.

But the project was extremely formative:

- Understanding Harbor’s concrete limitations in depth

- Realizing how crucial async and queues are in supply-chain security workflows

- Learning that the “human approval gate” often remains more powerful and flexible than 100% automatic blocking

- Discovering the pitfalls of patching an open-source monolith (especially the frontend)

- Working with Rust in realistic conditions, Kubernetes Jobs, reverse-engineering third-party APIs, parsing unstructured reports

- Experiencing the difficulty of coordinating 4 people on a distributed project with academic deadlines

If we were to propose a serious follow-up today, we’d start with:

- Harbor + webhooks

- Kyverno / Gatekeeper for admission policies

- Argo Workflows for scan orchestration

- Lightweight dedicated UI (or integration in Backstage / Port)

- Complete Sigstore for provenance

But for an end-of-Master-1 project, we’re quite proud of the result: a working demo that impresses juries and taught us enormously about container security and distributed systems architecture.

Links (unmaintained archives):

Technical questions welcome (DB schema, Rust extracts, YaraHunter patch, etc.).

Happy reading, and good luck with your security projects!